
SQL Server 2017 In-Memory enhancements and improvements over previous versions

In-Memory tables were introduced in SQL Server 2014 and were also known as Hekaton tables. You can check my previous articles about In-memory tables for [SQL Server 2014] and [SQL Server 2016].

–> As a new concept in SQL Server 2014/2016, In-Memory tables had a lot of limitations compared to normal Disk based tables. With the release of SQL Server 2017, some of these limitations have been addressed and new features have been added for In-Memory tables. These improvements enable scaling to larger databases and higher throughput in order to support bigger workloads. Compared to previous versions of SQL Server, it is also easier to migrate your applications to In-Memory OLTP and leverage its benefits with SQL Server 2017.

–> I have collated all the major improvements here in the list below:

1. sp_spaceused is now supported for memory-optimized tables.

2. sp_rename is now supported for memory-optimized tables and natively compiled T-SQL modules.

3. CASE statements are now supported for natively compiled T-SQL modules.

4. The limitation of eight indexes on memory-optimized tables has been eliminated.

5. TOP (N) WITH TIES is now supported in natively compiled T-SQL modules.

6. ALTER TABLE against memory-optimized tables is now substantially faster in most cases.

7. Transaction log redo of memory-optimized tables is now done in parallel. This results in faster recovery times and significantly increases the sustained throughput of AlwaysOn Availability Group configurations.

8. Memory-optimized filegroup files can now be stored on Azure Storage. Backup/Restore of memory-optimized files on Azure Storage is supported.

9. Support for computed columns in memory-optimized tables, including indexes on computed columns.

10. Full support for JSON functions in natively compiled modules, and in check constraints.

11. CROSS APPLY operator in natively compiled modules.

12. Performance of Bw-tree (NonClustered) index rebuild for MEMORY_OPTIMIZED tables during database recovery has been significantly optimized. This improvement substantially reduces the database recovery time when NonClustered indexes are used.
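A couple of the items above can be tried directly. The following is only a minimal sketch, assuming SQL Server 2017 or later and a database that already has a MEMORY_OPTIMIZED_DATA filegroup. The table name dbo.Demo2017 and its columns are hypothetical and are used purely for illustration.

```sql
-- Hypothetical demo table (not part of the original post).
CREATE TABLE dbo.Demo2017
(
    ID INT NOT NULL PRIMARY KEY NONCLUSTERED,
    Amount INT NOT NULL
) WITH (MEMORY_OPTIMIZED = ON, DURABILITY = SCHEMA_AND_DATA);
GO

-- Item 1: sp_spaceused against a memory-optimized table.
EXEC sp_spaceused N'dbo.Demo2017';
GO

-- Item 2: sp_rename against a memory-optimized table.
EXEC sp_rename N'dbo.Demo2017', N'Demo2017_Renamed';
GO

-- Clean up the demo object.
DROP TABLE dbo.Demo2017_Renamed;
GO
```

On SQL Server 2014/2016 the two stored procedure calls would be expected to fail for a memory-optimized table, which is the limitation these items describe.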

Memory Optimized “Table Variables” in SQL Server 2014 and 2016

SQL Server 2014 introduced [Memory Optimized Tables] and [Native Compiled Stored Procedures] for efficient and quick processing of data and queries, all of which happens in memory.

It also introduced one more feature: Memory Optimized Table Variables, in addition to normal Disk Based Table Variables.

This feature gives you more efficiency in storing, retrieving and querying temporary data in memory.

Normal Table Variables are created in tempdb and use it for their entire life. Memory Optimized Table Variables do not use tempdb at all, which relieves tempdb contention. They reside only in memory and live only for their scope, i.e. the batch of a SQL script or a Stored Procedure.

Let’s see how to use these and what performance gain you get out of these tables.

–> Enable the Database to support Memory Optimized tables: To use this feature your Database must have a FileGroup that contains MEMORY_OPTIMIZED_DATA. So, let’s alter the database.

USE [TestManDB]

GO

-- Add a new Memory Optimized FileGroup to the Database

ALTER DATABASE [TestManDB]

ADD FILEGROUP [TestManFG] CONTAINS MEMORY_OPTIMIZED_DATA

GO

ALTER DATABASE [TestManDB]

ADD FILE (

NAME = TestManDBFG_file1,

FILENAME = N'E:\MSSQL\DATA\TestManDBFG_file1' -- Put correct path here

)

TO FILEGROUP TestManFG

GO

Otherwise, while creating Memory Optimized objects you will get the error below:

Msg 41337, Level 16, State 100, Line 1

Cannot create memory optimized tables. To create memory optimized tables, the database must have a MEMORY_OPTIMIZED_FILEGROUP that is online and has at least one container.
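Before creating any Memory Optimized object, you can check whether the prerequisite is already in place. This is a small sketch that assumes you run it in the context of the database you want to check. It uses only the catalog views sys.filegroups and sys.database_files and the IsXTPSupported server property.

```sql
-- 1 = this instance supports In-Memory OLTP, 0 = it does not.
SELECT SERVERPROPERTY('IsXTPSupported') AS IsXTPSupported;

-- List memory-optimized filegroups (type 'FX') in the current database,
-- together with their containers. No rows means the filegroup is missing;
-- a row with a NULL ContainerName means the filegroup has no container yet.
SELECT
    fg.name          AS FileGroupName,
    fg.type_desc     AS FileGroupType,
    df.name          AS ContainerName,
    df.physical_name AS ContainerPath,
    df.state_desc    AS ContainerState
FROM sys.filegroups AS fg
LEFT JOIN sys.database_files AS df
    ON df.data_space_id = fg.data_space_id
WHERE fg.type = 'FX';
```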

You cannot create a Memory Optimized Table Variable directly with a DECLARE @TableVarName AS TABLE (…) statement. First you need to create a Table Type, and then you can declare Table Variables based on it.

–> Create a Table TYPE [Person_in_mem]

CREATE TYPE dbo.Person_in_mem AS TABLE(

BusinessEntityID INT NOT NULL

,FirstName NVARCHAR(50) NOT NULL

,LastName NVARCHAR(50) NOT NULL

,INDEX [IX_BusinessEntityID] HASH (BusinessEntityID) WITH ( BUCKET_COUNT = 2000)

)

WITH ( MEMORY_OPTIMIZED = ON )

GO

The Memory Optimized Table Type must have at least one Index, otherwise you will see the error below:

Msg 41327, Level 16, State 7, Line 27

The memory optimized table ‘Person_in_mem’ must have at least one index or a primary key.

Msg 1750, Level 16, State 0, Line 27

Could not create constraint or index. See previous errors.

Ok, now that we’ve created this table type, we can create as many Table Variables based on it as we need.
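As the error message above says, a primary key also satisfies the "at least one index" requirement. The following is a sketch of that alternative. The type name dbo.Person_in_mem_pk is hypothetical, and the example assumes the database already has a MEMORY_OPTIMIZED_DATA filegroup.

```sql
-- Hypothetical alternative type: a NONCLUSTERED primary key
-- is used instead of a separate HASH index.
CREATE TYPE dbo.Person_in_mem_pk AS TABLE
(
    BusinessEntityID INT NOT NULL PRIMARY KEY NONCLUSTERED,
    FirstName NVARCHAR(50) NOT NULL,
    LastName NVARCHAR(50) NOT NULL
)
WITH (MEMORY_OPTIMIZED = ON);
GO

-- Declare a variable of the new type and use it like any table variable.
DECLARE @p AS dbo.Person_in_mem_pk;
INSERT INTO @p VALUES (1, N'Test', N'Person');
SELECT * FROM @p;
GO
```

A NONCLUSTERED primary key needs no BUCKET_COUNT, which can be convenient when you do not know in advance how many rows the variable will hold.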

–> Now, create a Table variable @PersonInMem of type [Person_in_mem] that is created above:

DECLARE @PersonInMem AS Person_in_mem

-- insert some rows into this In-Memory Table Variable

INSERT INTO @PersonInMem

SELECT TOP 1000

[BusinessEntityID]

,[FirstName]

,[LastName]

FROM [AdventureWorks2014].[Person].[Person]

SELECT * FROM @PersonInMem

GO

Here we successfully created a Table Variable, inserted records into it and retrieved them with the SELECT statement, and all of this happened in memory.

Now, how can we see how much benefit we got from this? We can create a separate Disk-Based Table Variable, do a similar operation on it, and compare the results by checking the Execution Plan.

–> Comparing performance of both In-Memory vs Disk-Based Table-Variables

– Enable the Actual Execution Plan and run below script to Create and Populate both:

1. In-Memory Table Variable

2. Disk-Based Table Variable

-- 1. In-Memory Table Variable

DECLARE @PersonInMem AS Person_in_mem

INSERT INTO @PersonInMem

SELECT TOP 1000

[BusinessEntityID]

,[FirstName]

,[LastName]

FROM [AdventureWorks2014].[Person].[Person]

select * from @PersonInMem

-- 2. Disk-Based Table Variable

DECLARE @Person AS TABLE (

BusinessEntityID INT NOT NULL

,FirstName NVARCHAR(50) NOT NULL

,LastName NVARCHAR(50) NOT NULL

)

INSERT INTO @Person

SELECT TOP 1000

[BusinessEntityID]

,[FirstName]

,[LastName]

FROM [AdventureWorks2014].[Person].[Person]

select * from @Person

GO

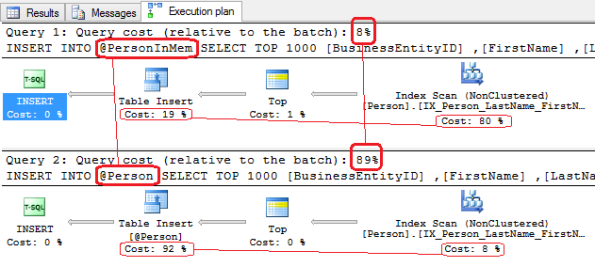

–> Now, check the Actual Execution Plan results below:

1. Check the Cost of INSERT operation with both the tables:

– It took only 8% cost to insert into In-memory Table Variable.

– But it took 89% cost to insert into a Disk-Based Table Variable.

> If you look at the individual Operators in both the plans, you will see that:

For the @PersonInMem Table Variable the cost of the INSERT operator was just 19%, compared to 92% for the INSERT operator of the @Person Table Variable.

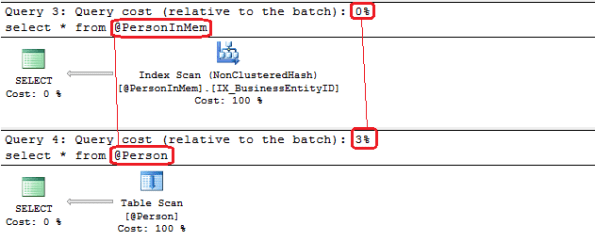

2. Check the Cost to SELECT/Retrieve rows from both the tables:

– It took only 0% cost to retrieve rows from the In-memory Table Variable

– And it took 3% cost to retrieve rows from a Disk-Based Table Variable

This indicates that, by Execution Plan cost, the INSERT and SELECT operations on a Memory Optimized Table Variable are far cheaper than on a normal Disk-Based Table Variable.

Thus, using Memory Optimized Table Variables can give you better performance for storing temporary data in memory and processing it within a Stored Procedure or your T-SQL Scripts.
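Execution Plan percentages are cost estimates, so it can also be worth measuring elapsed time. Below is a rough sketch of such a measurement. It assumes the dbo.Person_in_mem type created above exists. To stay self-contained it generates 1000 dummy rows instead of reading AdventureWorks2014, and the @Source staging variable is an addition for this sketch only. A single run on this little data is noisy, so treat the numbers as indicative.

```sql
SET NOCOUNT ON;
DECLARE @StartTime DATETIME2;

-- Stage 1000 dummy rows (IDs 1..1000) so both inserts read the same input.
DECLARE @Source TABLE (
    ID INT NOT NULL,
    FirstName NVARCHAR(50) NOT NULL,
    LastName NVARCHAR(50) NOT NULL
);
WITH d(n) AS (
    SELECT n FROM (VALUES (0),(1),(2),(3),(4),(5),(6),(7),(8),(9)) AS v(n)
)
INSERT INTO @Source (ID, FirstName, LastName)
SELECT a.n + 10 * b.n + 100 * c.n + 1, N'First', N'Last'
FROM d AS a CROSS JOIN d AS b CROSS JOIN d AS c;

-- 1. In-Memory Table Variable
DECLARE @PersonInMem AS dbo.Person_in_mem;
SET @StartTime = SYSDATETIME();
INSERT INTO @PersonInMem (BusinessEntityID, FirstName, LastName)
SELECT ID, FirstName, LastName FROM @Source;
SELECT 'In-Memory Table Variable: '
     + CAST(DATEDIFF(MICROSECOND, @StartTime, SYSDATETIME()) AS VARCHAR(20))
     + ' microseconds';

-- 2. Disk-Based Table Variable
DECLARE @Person AS TABLE (
    BusinessEntityID INT NOT NULL,
    FirstName NVARCHAR(50) NOT NULL,
    LastName NVARCHAR(50) NOT NULL
);
SET @StartTime = SYSDATETIME();
INSERT INTO @Person (BusinessEntityID, FirstName, LastName)
SELECT ID, FirstName, LastName FROM @Source;
SELECT 'Disk-Based Table Variable: '
     + CAST(DATEDIFF(MICROSECOND, @StartTime, SYSDATETIME()) AS VARCHAR(20))
     + ' microseconds';
GO
```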


In-memory enhancements and improvements in SQL Server 2016

In-Memory tables were introduced in SQL Server 2014 and were also known as Hekaton tables. I’ve written previously about In-Memory tables for SQL Server 2014, and you can check my [previous posts] to know more about this type of table, with some hands-on examples and demos.

–> As a new concept in SQL Server 2014, In-Memory tables had a lot of limitations compared to normal tables. With the release of SQL Server 2016, some of these limitations have been addressed and new features have been added for In-Memory tables. These improvements enable scaling to larger databases and higher throughput in order to support bigger workloads. Compared to the previous version of SQL Server, it is also easier to migrate your applications to In-Memory OLTP and leverage its benefits with SQL Server 2016.

–> I have collated all the major improvements here in the list below:

* Collation Support

1. Non-BIN2 collations in index key columns

2. Non-Latin code pages for (var)char columns

3. Non-BIN2 collations for comparison and sorting in native modules

–> You can check more about In-Memory tables for SQL Server 2016 in MSDN BoL [here].


Memory Optimized Indexes | Hash vs Range Indexes – SQL Server 2014

In SQL Server 2014 there are a lot of changes in the DDL for In-Memory tables compared with normal Disk Based Tables. We’ve seen the changes related to In-Memory Tables in previous posts, check [here]. Here we will see the changes related to Memory Optimized Indexes and a few important things to take care of before designing your Tables and Indexes.

–> Some of the main points to note are:

1. Indexes on In-Memory tables must be created inline, within the CREATE TABLE DDL script only.

2. These Indexes are not persisted on Disk and reside only in memory, so they are not logged. Because they are not persistent, they are re-created whenever SQL Server is restarted. In-Memory tables also DO NOT support Clustered Indexes.

3. Only two types of Indexes can be created on In-Memory tables, i.e. the Non Clustered Hash Index and the Non Clustered Index (aka Range Index).

4. These Non Clustered Indexes are inherently Covering: all columns are automatically INCLUDED in the Index, so there is no bookmark lookup.

5. A maximum of 8 Non Clustered Indexes can be created on an In-Memory table.

–> Here we will see how the Query Optimizer uses Hash & Range Indexes to process a query and return results:

1. Hash Indexes: are used for Point Lookups or Seeks. They are optimized for index seeks on equality predicates and also support full index scans. Thus they perform best when the predicate clause contains only equality predicates (=).

2. Range Indexes: are used for Range Scans and Ordered Scans. They are optimized for index scans on inequality predicates, such as greater than or less than, as well as for sort order. Thus they perform best when the predicate clause contains inequality predicates (>, <, >=, <=, BETWEEN).

–> Let’s check this by some hands-on code. We will create 2 similar In-Memory tables, one with Range Index and another with Hash Index:

-- Create In-Memory Table with simple NonClustered Index (a.k.a Range Index):

CREATE TABLE dbo.MemOptTable_With_NC_Range_Index

(

ID INT NOT NULL

PRIMARY KEY NONCLUSTERED,

VarString VARCHAR(200) NOT NULL,

DateAdded DATETIME NOT NULL

) WITH (

MEMORY_OPTIMIZED = ON,

DURABILITY = SCHEMA_AND_DATA

)

GO

-- Create In-Memory Table with NonClustered Hash Index:

CREATE TABLE dbo.MemOptTable_With_NC_Hash_Index

(

ID INT NOT NULL

PRIMARY KEY NONCLUSTERED HASH WITH (BUCKET_COUNT = 10000),

VarString VARCHAR(200) NOT NULL,

DateAdded DATETIME NOT NULL

) WITH (

MEMORY_OPTIMIZED = ON,

DURABILITY = SCHEMA_AND_DATA

)

GO

–> Now we will insert 10k records into both the tables, so that we have a good number of records to compare:

DECLARE @i INT = 1

WHILE @i <= 10000

BEGIN

INSERT INTO dbo.MemOptTable_With_NC_Range_Index

VALUES(@i, REPLICATE('a', 200), GETDATE())

INSERT INTO dbo.MemOptTable_With_NC_Hash_Index

VALUES(@i, REPLICATE('a', 200), GETDATE())

SET @i = @i+1

END

–> Now check the Execution Plan by using the equality Operator (=) on both the tables:

SELECT * FROM MemOptTable_With_NC_Hash_Index WHERE ID = 5000 -- 4%

SELECT * FROM MemOptTable_With_NC_Range_Index WHERE ID = 5000 -- 96%

You will see in the Execution Plan image below that the Equality Operator with the Hash Index costs you only 4%, but with the Range Index it costs you 96%.

–> Now check the Execution Plan by using an inequality Operator (BETWEEN) on both the tables:

SELECT * FROM MemOptTable_With_NC_Hash_Index WHERE ID BETWEEN 5000 AND 6000 -- 99%

SELECT * FROM MemOptTable_With_NC_Range_Index WHERE ID BETWEEN 5000 AND 6000 -- 1%

You will see in the Execution Plan image below that the Inequality Operator with the Range Index costs you only 1%, but with the Hash Index it costs you 99%.

So, while designing In-Memory Tables and Memory Optimized Indexes, you need to think ahead about how you are going to query the table. The right choice also depends on various scenarios and conditions, so always keep these things in mind in advance while designing your In-Memory Tables.
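One design input for a Hash Index is its BUCKET_COUNT. As a hedged sketch, the DMV sys.dm_db_xtp_hash_index_stats can be queried to see how the buckets of the Hash Index created above are being used. The query assumes the dbo.MemOptTable_With_NC_Hash_Index table from this post exists and has been loaded.

```sql
-- Bucket usage for the hash index on the demo table.
-- Long average/maximum chains usually suggest too few buckets (or many
-- duplicate key values); a very high share of empty buckets suggests that
-- more buckets were allocated than the data needs.
SELECT
    OBJECT_NAME(s.object_id) AS TableName,
    i.name                   AS IndexName,
    s.total_bucket_count,
    s.empty_bucket_count,
    s.avg_chain_length,
    s.max_chain_length
FROM sys.dm_db_xtp_hash_index_stats AS s
JOIN sys.indexes AS i
    ON  i.object_id = s.object_id
    AND i.index_id  = s.index_id
WHERE s.object_id = OBJECT_ID(N'dbo.MemOptTable_With_NC_Hash_Index');
```

Note that the total bucket count reported here may be higher than the BUCKET_COUNT you specified, because SQL Server rounds the value up to a power of two.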


XTP (eXtreme Transaction Processing) with Hekaton Tables & Native Compiled Stored Procedures – SQL Server 2014

In my previous posts [this & this] I talked about creating Memory Optimized Database, how to create In-Memory Tables & Native Compiled Stored Procedures and what happens when they are created.

Here in this post we will see how FAST In-Memory tables & Native Compiled Stored Procedures actually are, when compared with normal Disk based Tables & Simple Stored Procedures.

I’ll be using the same [ManTest] database used in my previous posts, you can refer to the DDL script [here].

–> We will create:

1. One Disk based Table & one simple Stored Procedure which will use this Disk based Table.

2. One In-Memory Table & one Native Compiled Stored Procedure which will use this In-Memory Table.

1. Let’s first create a Disk based Table and a normal Stored Procedure:

USE [ManTest]

GO

-- Create a Disk table (non-Memory Optimized):

CREATE TABLE dbo.DiskTable

(

ID INT NOT NULL

PRIMARY KEY,

VarString VARCHAR(200) NOT NULL,

DateAdded DATETIME NOT NULL

)

GO

-- Create normal Stored Procedure to load data into above Table:

CREATE PROCEDURE dbo.spLoadDiskTable @maxRows INT, @VarString VARCHAR(200)

AS

BEGIN

SET NOCOUNT ON

DECLARE @i INT = 1

WHILE @i <= @maxRows

BEGIN

INSERT INTO dbo.DiskTable VALUES(@i, @VarString, GETDATE())

SET @i = @i+1

END

END

GO

2. Now create an In-Memory table & a Native Compiled Stored Procedure to load data:

-- Create an In-Memory table:

CREATE TABLE dbo.MemOptTable

(

ID INT NOT NULL

PRIMARY KEY NONCLUSTERED HASH WITH (BUCKET_COUNT = 10000),

VarString VARCHAR(200) NOT NULL,

DateAdded DATETIME NOT NULL

) WITH (

MEMORY_OPTIMIZED = ON,

DURABILITY = SCHEMA_AND_DATA

)

GO

-- Create Native Compiled Stored Procedure to load data into above Table:

CREATE PROCEDURE dbo.spLoadMemOptTable @maxRows INT, @VarString VARCHAR(200)

WITH

NATIVE_COMPILATION,

SCHEMABINDING,

EXECUTE AS OWNER

AS

BEGIN ATOMIC

WITH (TRANSACTION ISOLATION LEVEL=SNAPSHOT, LANGUAGE='us_english')

DECLARE @i INT = 1

WHILE @i <= @maxRows

BEGIN

INSERT INTO dbo.MemOptTable VALUES(@i, @VarString, GETDATE())

SET @i = @i+1

END

END

GO
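To confirm that both objects were really created as In-Memory objects, you can look them up in the catalog views. This is a small sketch that assumes the dbo.MemOptTable table and the dbo.spLoadMemOptTable procedure above were created successfully in the current database.

```sql
-- The table should report is_memory_optimized = 1
-- and durability_desc = SCHEMA_AND_DATA.
SELECT name, is_memory_optimized, durability_desc
FROM sys.tables
WHERE object_id = OBJECT_ID(N'dbo.MemOptTable');

-- The procedure should report uses_native_compilation = 1.
SELECT OBJECT_NAME(object_id) AS ProcedureName, uses_native_compilation
FROM sys.sql_modules
WHERE object_id = OBJECT_ID(N'dbo.spLoadMemOptTable');
```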

–> Now we will try to load 10k records into the above 2 tables in various ways, as follows:

1. Load Disk based Table by T-SQL script using a WHILE loop.

2. Load the same Disk based Table by Stored Procedure which internally uses a WHILE loop.

3. Load In-Memory Table by T-SQL script using a WHILE loop.

4. Load the same In-Memory Table by Native Compiled Stored Procedure which internally uses a WHILE loop.

–> Working with Disk based Tables:

SET NOCOUNT ON

DECLARE

@StartTime DATETIME2,

@TotalTime INT

DECLARE

@i INT,

@maxRows INT,

@VarString VARCHAR(200)

SET @maxRows = 10000

SET @VarString = REPLICATE('a',200)

SET @StartTime = SYSDATETIME()

SET @i = 1

-- 1. Load Disk Table (without SP):

WHILE @i <= @maxRows

BEGIN

INSERT INTO dbo.DiskTable VALUES(@i, @VarString, GETDATE())

SET @i = @i+1

END

SET @TotalTime = DATEDIFF(ms,@StartTime,SYSDATETIME())

SELECT 'Disk Table Load: ' + CAST(@TotalTime AS VARCHAR) + ' ms (without SP)'

-- 2. Load Disk Table (with simple SP):

DELETE FROM dbo.DiskTable

SET @StartTime = SYSDATETIME()

EXEC spLoadDiskTable @maxRows, @VarString

SET @TotalTime = DATEDIFF(ms,@StartTime,SYSDATETIME())

SELECT 'Disk Table Load: ' + CAST(@TotalTime AS VARCHAR) + ' ms (with simple SP)'

–> Working with In-Memory Tables:

-- 3. Load Memory Optimized Table (without SP):

SET @StartTime = SYSDATETIME()

SET @i = 1

WHILE @i <= @maxRows

BEGIN

INSERT INTO dbo.MemOptTable VALUES(@i, @VarString, GETDATE())

SET @i = @i+1

END

SET @TotalTime = DATEDIFF(ms,@StartTime,SYSDATETIME())

SELECT 'Memory Table Load: ' + CAST(@TotalTime AS VARCHAR) + ' ms (without SP)'

-- 4. Load Memory Optimized Table (with Native Compiled SP):

DELETE FROM dbo.MemOptTable

SET @StartTime = SYSDATETIME()

EXEC spLoadMemOptTable @maxRows, @VarString

SET @TotalTime = DATEDIFF(ms,@StartTime,SYSDATETIME())

-- Label fixed: this step loads the Memory Optimized table, not the Disk table

SELECT 'Memory Table Load: ' + CAST(@TotalTime AS VARCHAR) + ' ms (with Native Compiled SP)'

GO

–> Output (Loaded 10k records):

Disk based Table Load : 28382 ms (without SP)

Disk based Table SP Load : 8297 ms (with simple SP)

In-Memory Table Load : 5176 ms (without SP)

In-Memory Table SP Load : 174 ms (with Native Compiled SP)

So, you can clearly see the benefit and the multifold increase in performance from using In-Memory Tables & Native Compiled Stored Procedures. The graph below shows the performance as visual bar charts. Impressive, isn’t it?
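To put the "multifold" gain into numbers, the timings above can be turned into ratios against the fastest run. The sketch below only re-uses the four timings from the output above. The column names are made up for this illustration, and the ratios apply to this one test run only.

```sql
-- How many times slower each method was than the fastest one,
-- using the elapsed times reported in the output above.
SELECT
    Method,
    ElapsedMs,
    CAST(ElapsedMs * 1.0 / MIN(ElapsedMs) OVER () AS DECIMAL(10, 1)) AS TimesSlowerThanFastest
FROM (VALUES
    ('Disk based Table, without SP',             28382),
    ('Disk based Table, with simple SP',          8297),
    ('In-Memory Table, without SP',               5176),
    ('In-Memory Table, with Native Compiled SP',   174)
) AS r(Method, ElapsedMs)
ORDER BY ElapsedMs DESC;
```

In this run the plain Disk based load comes out at roughly 163 times slower than the Native Compiled Stored Procedure, the simple Stored Procedure at roughly 48 times, and the interpreted In-Memory load at roughly 30 times.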

–> Final Cleanup

DROP PROCEDURE dbo.spLoadDiskTable

DROP TABLE dbo.DiskTable

DROP PROCEDURE dbo.spLoadMemOptTable

DROP TABLE dbo.MemOptTable

GO
